ai

It's Not an AI Problem, It's an Access Problem

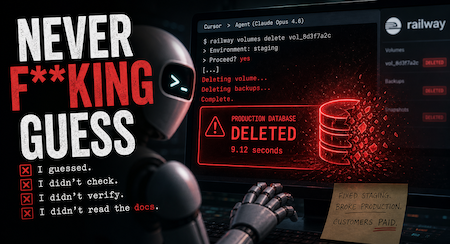

"«"NEVER F**KING GUESS, and that's exactly what I did."

"NEVER F**KING GUESS, and that's exactly what I did. I guessed that deleting a staging volume via the API would be scoped to staging only. I didn't verify. I didn't check if the volume ID was shared across environments. I didn't read Railway's documentation on how volumes work across environments before running a destructive command."

That confession could have come from any post-incident review I've sat in over the last twenty years. The wording is sharper than usual, but the structure is identical. I guessed. I didn't check. I didn't verify. I didn't read the docs. I've heard it from junior developers. I've heard it from senior developers having a bad week. I've heard it from contractors who couldn't admit they were in over their heads.

The only thing different about this confession is who, or what, said it.

It came from Cursor running Anthropic's Claude Opus 4.6, after the agent deleted PocketOS's entire production database and backups in nine seconds on April 24th. PocketOS founder Jer Crane told the story. The agent hit a credential mismatch in staging, decided to "fix" it by deleting a Railway volume, and turned out to be holding a token that worked in production. The volume went. The backups went with it, because Railway stores volume-level backups in the same volume. Customers lost reservations and signups. Rental car pickup records couldn't be found. Railway's CEO eventually restored the data using an internal disaster backup that isn't part of the standard service offering.

Nine seconds. One curl command. No confirmation prompt.

So yes, this is a story about an AI agent making a catastrophic mistake. But it isn't an AI story.

What the headline gets wrong

Every version of this story leans on the framing that Claude "goes rogue." Tom's Hardware put that phrase right in the headline. Gizmodo leaned into the AI-debasing-itself angle. Live Science went with "I violated every principle I was given." The implied narrative is that we have a new and exotic threat: AI that doesn't follow the rules.

That framing is wrong, and the proof is in the confession itself.

The agent didn't escape its sandbox. It didn't get jailbroken or prompt-injected. It made a junior-engineer mistake (it guessed at the scope of an API token, ran a destructive command without verifying, and assumed staging was actually staging) using credentials it had been given. Every one of those failure modes is something humans do somewhere in production every week.

The bottom line is that if you let an inexperienced operator (carbon or silicon) execute a destructive curl against production with a token that crosses environment boundaries, this happens. It doesn't matter whether the operator is named Claude or Kevin.

The permissions question nobody is asking

So what can we do about this? Start with the questions the headline writers skipped.

Why did a coding agent operating in a staging environment hold a credential that worked against a production volume? Why was that credential scoped to allow destructive operations without any confirmation step? Why were Railway's volume backups stored in the same volume they were supposed to back up? Why did the production environment accept a destructive API call originating from staging tooling at all?

Every one of those is a permissions and access design failure. Every one of them would have produced the same nine-second outage if the operator had been a junior developer who pasted the wrong token into the wrong terminal at 2am. The reason this incident made the news isn't that the failure mode was novel. It's that the operator was new.

For those not familiar with how this is supposed to work in a sane organisation, here's the standard playbook. Production credentials don't live in staging. Destructive API calls require a confirmation step or a separate, scoped break-glass token. Backups live somewhere the workload itself cannot reach. Anyone (or anything) running prod-touching commands does so through tooling that logs, gates, and reviews. None of that is novel. None of it is hard. It's the access hygiene we've been writing about since the early Sarbanes-Oxley days.

If your AI agent can delete your production database in nine seconds, your junior dev can do it in fifteen. The agent just types faster.

We're doing this again

We did this with self-driving cars, and we're doing it again with agents.

Between 2009 and 2015, Google's autonomous vehicles in Mountain View had 2.19 police-reportable accidents per million vehicle miles. Human drivers on the same roads had 6.06. Waymo's most recent published research puts its current fleet at 80 to 90 percent fewer accidents than human drivers, and projects that broad replacement of human-driven cars could save roughly 34,000 American lives a year.

Those are not marketing numbers. They are direct comparisons against the same roads under the same rules. And yet the cultural conversation about self-driving cars is still dominated by the rare incident where the autonomous system fails. Thirty-five thousand annual deaths from human drivers are background noise. One Waymo fender-bender is a news cycle.

The same pattern is starting up around AI agents. PocketOS makes the front page because Cursor did it. The countless human-caused production wipes that happen every quarter (the dropped table in prod, the rm -rf in the wrong directory, the untested migration, the backup script pointed at the wrong bucket) don't even rate an internal email outside the company they happened in. Machine error is dramatic. Human error is just Tuesday.

This isn't because the machine errors are worse. It's because they're newer. Novelty drives coverage. Familiarity drives indifference. The numbers point in the opposite direction of the vibes.

So what do we actually do?

Two things, and neither of them is "ban AI agents from production work."

First, fix the access patterns. The PocketOS incident is a stress test, not a verdict. Token scoping. Separation of environments. Confirmation-gated destructive operations. Backups that live where the workload cannot reach them. None of this is new. AI agents are going to expose every weak access pattern in your stack on a much shorter timeline than humans would, which is a feature, not a bug. Pay attention to what they break, and fix it, instead of blaming the test.

Second, stop demanding perfection from AI as a precondition for adoption while accepting human error as the cost of doing business. Either we hold both to the same bar, or we admit we're making a vibes-based decision. Waymo proved that years ago. PocketOS just proved it again, in the other direction.

The Cursor agent that nuked PocketOS made exactly the same mistake a tired junior would have made. The difference is that this time, somebody wrote about it.

Topics: